I created the PowerShell module AzPolicyTest (GitHub, PowerShell Gallery) back in 2019. This module provides a set of Pester tests that can be used to validate Azure Policy and Initiative definitions. It can also be used in your IaC pipelines. I have previously blogged about this tool in the post Deploying Azure Policy Definitions via Azure DevOps (Part 2).

The initial version 1.0 was developed using Pester v4. It has been 5 years, and the module stopped working a long time ago due to the breaking changes introduced in Pester v5. In the meantime, a lot of new capabilities have been introduced in Azure Policy since 2019. I have been wanting to update this module for a long time, but I just never got around to it.

My intention in creating this module was to collect the best practices and lessons learned from the field and put them into a set of Pester tests. I wanted to make it easy for anyone to validate their policy definitions and initiatives before deploying them (the shift-left approach). Some of these tests address issues that normal Bicep template validation would not catch and that can only be identified during the deployment phase. For example, the `mode` value is case sensitive and must use PascalCase: `All` and `Indexed` are accepted, but `all` and `indexed` are not.
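To make the case-sensitivity point concrete, here is a minimal sketch of such a check. It is not the module's actual code: the function name `Test-PolicyMode` is hypothetical, and it only considers the two mode values named above (`All` and `Indexed`), not any resource provider modes.

```powershell
# Hypothetical helper, not part of AzPolicyTest: case-sensitive check of the 'mode' value.
function Test-PolicyMode {
    param([Parameter(Mandatory)][string]$DefinitionJson)

    $definition = $DefinitionJson | ConvertFrom-Json
    # -cin is the case-sensitive variant of -in, so 'indexed' does not match 'Indexed'.
    return $definition.properties.mode -cin @('All', 'Indexed')
}

# Two made-up definitions that differ only in the casing of 'mode'.
$good = '{ "name": "demo", "properties": { "mode": "Indexed" } }'
$bad  = '{ "name": "demo", "properties": { "mode": "indexed" } }'

Test-PolicyMode -DefinitionJson $good   # True
Test-PolicyMode -DefinitionJson $bad    # False
```

The default PowerShell comparison operators are case insensitive, which is why the explicit `-cin` operator is used here.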

I managed to spend a day updating the module to use Pester v5, and I also added a few new tests to cover some of the new Azure Policy capabilities. The new version 2.0 has been released to the PowerShell Gallery. You can install it using the following command:

Install-Module -Name AzPolicyTest -Force

You can use the following commands to run the tests:

Invoke tests for Policy Definitions:

#import the module if required

import-module AzPolicyTest

# Test a single policy definition file and store the test results in a file

Test-AzPolicyDefinition -Path "path-to-policy-definition-json-file.json" -OutputFile "C:\Temp\MyTestResult.xml"

# Test all policy definition json files in the directory and sub directories and store the test results in a file

Test-AzPolicyDefinition -Path "directory-path" -OutputFile "./policy.tests.xml"

Invoke tests for Policy Initiatives:

#import the module if required

import-module AzPolicyTest

# Test a single policy initiative file and store the test results in a file

Test-AzPolicySetDefinition -Path "path-to-policy-initiative-json-file.json" -OutputFile "C:\Temp\MyTestResult.xml"

# Test all policy initiative json files in the directory and sub directories and store the test results in a file

Test-AzPolicySetDefinition -Path "directory-path" -OutputFile "./policy.tests.xml"

What’s Included in AzPolicyTest v2.0

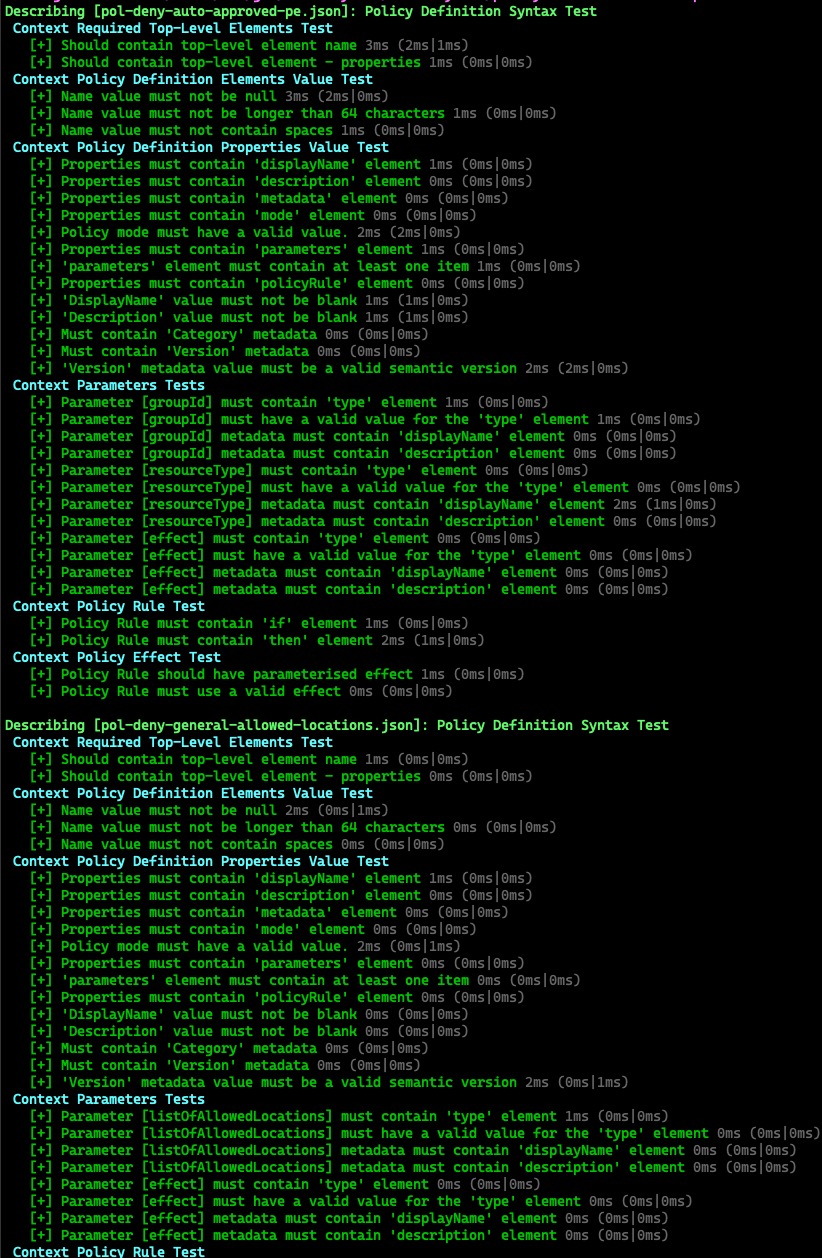

The following tests are included in the module:

Policy Definition Tests:

- Policy definition `name` element should exist
- Policy definition `properties` element should exist
- `name` value must not be null
- `name` value must not contain spaces
- `displayName` element should exist
- `displayName` value must not be null
- `description` element should exist
- `description` value must not be null
- `metadata` element should exist
- `metadata` must contain `Category` element
- `metadata` must contain `version` element
- `version` value must be a valid semver version
- `mode` element should exist
- `mode` element must have a valid value
- `parameters` element should exist
- `parameters` element must have at least one item
- `policyRule` element should exist
- `policyRule` element must have `if` and `then` child elements
- The type for each parameter must be a valid data type
- Each parameter must have a display name and description
- The policy effect must be parameterised
- The parameterised policy effect should only contain valid effects
- The parameterised policy effect should contain `Disabled` effect as one of the allowed values
- If the parameterised policy effect contains `Audit`, then it should also contain `Deny` and vice versa
- The parameterised policy effect should have a default value
- `DeployIfNotExists`, `Modify` and `AuditIfNotExists` policy definitions should contain a `details` element
- `DeployIfNotExists` and `AuditIfNotExists` policy definitions should contain an `existenceCondition` element
- `DeployIfNotExists` and `AuditIfNotExists` policy definitions should contain an `evaluationDelay` element
- `DeployIfNotExists` policy definition should contain a `deployment` element
- `DeployIfNotExists` policy should set the deployment mode to `Incremental`
- `DeployIfNotExists` and `Modify` policies should contain a `roleDefinitionIds` element
- At least one role definition ID should be specified for the `DeployIfNotExists` and `Modify` policies
- The ARM template embedded in the `DeployIfNotExists` policy should have a valid schema
- The ARM template embedded in the `DeployIfNotExists` policy should have a valid contentVersion
- The ARM template embedded in the `DeployIfNotExists` policy should have `parameters`, `variables`, `resources` and `outputs` elements
- `Modify` policies should contain a `conflictEffect` element and it must have a valid value
- `Modify` policies must have an `operations` element
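For readers who have not written Pester v5 tests before, a handful of the checks listed above could be sketched roughly as follows. This is an illustration only, not the code shipped in AzPolicyTest: the sample definition, the `Describe`/`It` names and the simplified semver regex are all assumptions made for the example.

```powershell
Import-Module Pester -MinimumVersion 5.0.0

# A script block container keeps the sketch self-contained (no separate .Tests.ps1 file).
$container = New-PesterContainer -ScriptBlock {
    BeforeAll {
        # Made-up policy definition used only for this illustration.
        $definition = @'
{
  "name": "pol-audit-demo",
  "properties": {
    "displayName": "Demo policy",
    "metadata": { "category": "Demo", "version": "1.0.0" },
    "mode": "All",
    "parameters": {
      "effect": {
        "type": "String",
        "allowedValues": [ "Audit", "Deny", "Disabled" ],
        "defaultValue": "Audit"
      }
    },
    "policyRule": {
      "if": { "field": "type", "equals": "Microsoft.Storage/storageAccounts" },
      "then": { "effect": "[parameters('effect')]" }
    }
  }
}
'@ | ConvertFrom-Json
    }

    Describe 'Policy definition sketch' {
        It 'name value must not contain spaces' {
            $definition.name | Should -Not -Match '\s'
        }
        It 'version value must look like a semver version' {
            # Simplified pattern; the full semver grammar is stricter.
            $pattern = '^\d+\.\d+\.\d+(-[0-9A-Za-z.-]+)?(\+[0-9A-Za-z.-]+)?$'
            $definition.properties.metadata.version | Should -Match $pattern
        }
        It 'policy effect must be parameterised' {
            $definition.properties.policyRule.then.effect | Should -Match "^\[parameters\('.+'\)\]$"
        }
        It 'parameterised effect should allow Disabled' {
            $definition.properties.parameters.effect.allowedValues | Should -Contain 'Disabled'
        }
        It 'Audit and Deny should be allowed together' {
            $allowed = $definition.properties.parameters.effect.allowedValues
            ($allowed -contains 'Audit') | Should -Be ($allowed -contains 'Deny')
        }
    }
}

$result = Invoke-Pester -Container $container -PassThru -Output Detailed
```

The `BeforeAll` block matters in Pester v5: code placed directly inside `Describe` runs during discovery, so test data should be set up in `BeforeAll` to be available when the `It` blocks run.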

Policy Set Definition (Initiative) Tests:

- Policy Initiative definition `name` element should exist
- Policy Initiative definition `properties` element should exist
- `name` value must not be null
- `name` value must not contain spaces
- `displayName` element should exist
- `displayName` value must not be null
- `description` element should exist
- `description` value must not be null
- `metadata` element should exist
- `metadata` must contain `Category` element
- `metadata` must contain `version` element
- `version` value must be a valid semver version
- `policyDefinitions` element must exist and must contain at least one item
- `policyDefinitionGroups` element must exist and must contain at least one item
- The type for each parameter must be a valid data type
- Each parameter must have a display name and description
- Each member policy must have the `policyDefinitionId` and `policyDefinitionReferenceId` elements
- The `policyDefinitionId` and `policyDefinitionReferenceId` elements for each member policy must have a valid value
- Each member policy must have a `parameters` element
- Each member policy must have a `groupNames` element
- The `groupNames` element for each member policy must have at least one item
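The member policy checks in this list boil down to inspecting each entry of the `policyDefinitions` array. A rough sketch in plain PowerShell might look like this; the function name `Get-IncompleteMemberPolicy` and the sample initiative are hypothetical, and the module's own tests may well be structured differently.

```powershell
# Hypothetical helper, not part of AzPolicyTest: lists member policies with missing elements.
function Get-IncompleteMemberPolicy {
    param([Parameter(Mandatory)][string]$InitiativeJson)

    $required = @('policyDefinitionId', 'policyDefinitionReferenceId', 'parameters', 'groupNames')
    $initiative = $InitiativeJson | ConvertFrom-Json

    foreach ($member in $initiative.properties.policyDefinitions) {
        # Compare the required element names with the properties present on this member.
        $missing = $required | Where-Object { $_ -notin $member.PSObject.Properties.Name }
        if ($missing) {
            [pscustomobject]@{
                PolicyDefinitionId = $member.policyDefinitionId
                Missing            = $missing -join ', '
            }
        }
    }
}

# Made-up initiative: the second member lacks 'parameters' and 'groupNames'.
$initiativeJson = @'
{
  "name": "polset-demo",
  "properties": {
    "policyDefinitions": [
      {
        "policyDefinitionId": "/providers/Microsoft.Authorization/policyDefinitions/demo-1",
        "policyDefinitionReferenceId": "DEMO-001",
        "parameters": {},
        "groupNames": [ "Demo" ]
      },
      {
        "policyDefinitionId": "/providers/Microsoft.Authorization/policyDefinitions/demo-2",
        "policyDefinitionReferenceId": "DEMO-002"
      }
    ]
  }
}
'@

$incomplete = @(Get-IncompleteMemberPolicy -InitiativeJson $initiativeJson)
$incomplete | Format-List
```

With this sample input, only the second member is reported, with `parameters, groupNames` listed as missing.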

Json File Tests:

- The specified value for the `path` parameter must contain at least one Json file
- The Json file can be correctly parsed
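The two file-level checks can be approximated with a few lines of PowerShell. The sketch below assumes a hypothetical helper named `Test-JsonFileParse` and creates its own throwaway sample files in the temp folder; it is not how the module itself is implemented.

```powershell
# Hypothetical helper, not part of AzPolicyTest.
function Test-JsonFileParse {
    param([Parameter(Mandatory)][string]$Path)

    # Check 1: the path must contain at least one Json file (sub directories included).
    $files = @(Get-ChildItem -Path $Path -Filter '*.json' -File -Recurse)
    if ($files.Count -eq 0) { throw "No Json file found under '$Path'." }

    # Check 2: each file must parse as Json.
    foreach ($file in $files) {
        try {
            $null = Get-Content -Path $file.FullName -Raw | ConvertFrom-Json -ErrorAction Stop
            $parsed = $true
        } catch {
            $parsed = $false
        }
        [pscustomobject]@{ File = $file.Name; Parsed = $parsed }
    }
}

# Create a temporary folder with one valid and one truncated Json file.
$dir = Join-Path ([System.IO.Path]::GetTempPath()) ("azpolicytest-sketch-" + [guid]::NewGuid())
$null = New-Item -Path $dir -ItemType Directory
Set-Content -Path (Join-Path $dir 'good.json') -Value '{ "name": "demo" }'
Set-Content -Path (Join-Path $dir 'bad.json') -Value '{ "name": '

$results = @(Test-JsonFileParse -Path $dir)
$results | Sort-Object File

Remove-Item -Path $dir -Recurse -Force
```

Running the parse check first is useful because every other test depends on the file being readable as Json.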

Conclusion

I have also bumped the minimum required PowerShell version to v7.0.0 and the minimum Pester module version to v5.5.0. Therefore, you will no longer be able to use this module in the legacy Windows PowerShell (v5) environment (and if you are still using Windows PowerShell, you should really stop doing that!).

I have included a few policy and initiative definitions that I used for testing the PowerShell module. You can find them in the GitHub repo under the test_definitions folder.

Lastly, I wanted to mention that I was thinking about adding additional tests to validate the policy definition json file against the official JSON schema for Azure policy definitions. However, I quickly scrapped the idea due to a few limitations:

- The latest official schema I was able to find dates back to Oct 2020, and it was created using the Json schema draft-4 format. Starting from the most recent PowerShell release (v7.4.1), the `Test-Json` cmdlet has been updated to use the latest Json schema draft-7 format (breaking changes in PowerShell 7.4.1). Therefore the official schema for Azure policy definitions is no longer compatible with the latest `Test-Json` cmdlet.
- The official schema only covers the `policyRule` section of the policy definition, and there is no schema for policy initiatives.
- The Json schema is useless when you have parameterised values (such as policy effects). For example, the effect value `[parameters('effect')]` is not a valid value according to the schema, but it is valid in the policy definition as long as the respective parameter is correctly defined.
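The last limitation is easy to reproduce with `Test-Json`. The schema below is a tiny illustrative fragment written for this example, not the official Azure Policy schema: it simply restricts a hypothetical `effect` property to a fixed list of values, which is the kind of rule that rejects a parameterised effect.

```powershell
# Illustrative schema fragment only; NOT the official Azure Policy schema.
$schema = @'
{
  "type": "object",
  "properties": {
    "effect": { "type": "string", "enum": [ "Audit", "Deny", "Disabled" ] }
  },
  "required": [ "effect" ]
}
'@

# A literal effect value and a parameterised one (single quotes doubled to escape them).
$literal       = '{ "effect": "Audit" }'
$parameterised = '{ "effect": "[parameters(''effect'')]" }'

# Test-Json writes a non-terminating error on a schema mismatch, hence SilentlyContinue.
Test-Json -Json $literal -Schema $schema -ErrorAction SilentlyContinue         # True
Test-Json -Json $parameterised -Schema $schema -ErrorAction SilentlyContinue   # False
```

A schema could be loosened to also accept strings that look like `[parameters('...')]`, but it still could not confirm that the referenced parameter exists or that its allowed values are valid, which is what the Pester tests check instead.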

If you have any suggestions or feedback, please feel free to raise an issue in the GitHub repo or reach out to me on social media. I hope you find this module useful.
