Deploying Containerized Socks5 Proxy Server Using ACR, ACI and Azure DevOps

Background

In certain parts of the world, some of the popular apps and services that I use daily are blocked by state-owned firewalls. A couple of years ago, before we went to that part of the world for a family holiday, I looked into setting up proxy servers on the public cloud so we could actually use our Android phones while we were over there. One of my high school friends told me he was using a popular Socks5 proxy server called Shadowsocks, hosted on a GCP VM instance. Shadowsocks is a Linux-based server that is extremely easy to set up, and it provides client apps for Windows, OSX, Android (GitHub, Google Play) and iOS.

He was using GCP because of the price. It’s cheap: with the free credit you get when you sign up, it can last you a few months if you choose a small VM size.

However, it would be against my religion to follow in his footsteps and set mine up on GCP. Before our holiday, I created 3 Ubuntu VMs running Shadowsocks in 3 separate Azure regions (Australia, Singapore and USA). It turned out these VMs were extremely helpful. We used them every day while we were over there, and we’d switch between servers to get better speed when required. Without these servers, my daughter couldn’t have watched her favourite cartoons on Netflix or other streaming services on her iPad, and I couldn’t have installed the apps I needed from the Google Play Store or played an online game I was addicted to at the time. One day I was in the middle of the CBD of a very large city that I had never visited before, and I needed the GPS and a map to get where I was going. Google Maps wouldn’t even load until I connected my phone to one of my Shadowsocks instances.

After our holiday, I kept those servers running for a while. My friends from Australia used them a few times when they travelled to that particular country, and my friends from that country used them to access websites such as YouTube, Facebook, Twitter, etc. In the end, I shut them down to cut my Azure consumption. Having 3 D-series VMs sitting there idle does cost a bit.

Since Shadowsocks is very lightweight and does not keep any persistent data, I thought it would be a very good candidate for containerization, so I could cut the cost and just keep the servers running. I had some spare time over the last couple of weekends and decided to try hosting it on Azure Container Instances. After spending 2 Sunday afternoons, I managed to get the image hosted on Azure Container Registry (ACR) and deployed to Azure Container Instances (ACI) using Azure DevOps YAML pipelines. To be honest, I was surprised how easy it was to make the container image: it only took me around 15 minutes to create it from scratch and have it fully tested and running on Docker on my Mac Mini. Most of my time was spent designing the YAML pipelines and putting sufficient tests and scanning in place.

I’m going to go through how I used YAML pipelines in Azure DevOps to deploy an Azure Container Registry, build and push the Docker image to ACR, and create 3 container instances in 3 different Azure regions to run this image. Although I am using 2 separate projects in Azure DevOps and all my code is stored in Azure Repos, I’ve made a copy of all the code I’ve developed and stored it in a public GitHub repo here: https://github.com/TaoYang-cloud/containers.patterns

NOTE: Before we continue, let me set this straight. The purpose of this post is to demonstrate and share my experience of deploying a simple containerized app to Azure using Azure Pipelines. Hosting Internet-based proxy servers is legal. We are all law-abiding citizens, so don’t hold me responsible for your inappropriate use of proxy servers.

Pre-requisites

I’m using several Azure DevOps extensions in my pipelines. If you don’t want to use them, you can remove the corresponding steps from the pipelines:

- Microsoft Security Code Analysis: https://secdevtools.azurewebsites.net/

- Run ARM TTK Tests: https://marketplace.visualstudio.com/items?itemName=Sam-Cogan.ARMTTKExtension

Azure Container Registry Pipeline

Firstly, I created a pipeline to deploy a container registry to host the Docker image. The pattern is located in the acr folder in the repo. The YAML pipeline deploys an ARM template, which contains an ACR and a key vault (for storing the ACR admin credentials).

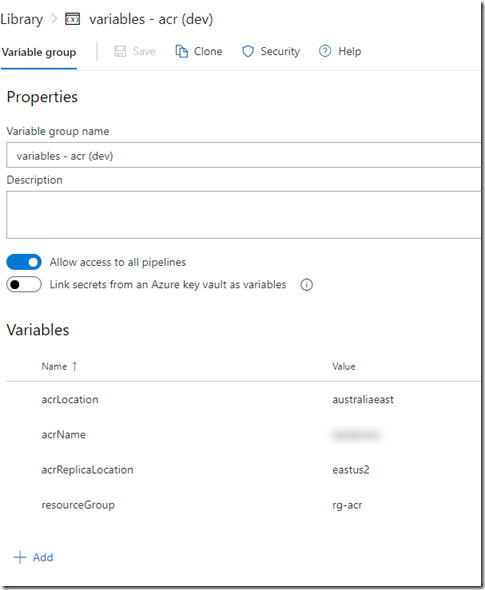

This pipeline uses two service connections for connecting to my Azure subscriptions (one for Dev and one for Prod). I named these connections sub-workload-dev and sub-workload-prod. It also uses 2 variable groups called “variables – acr (dev)” and “variables – acr (prod)”. The following variables are stored in these variable groups:

- acrLocation

- acrName

- acrReplicationLocation

- resourceGroup

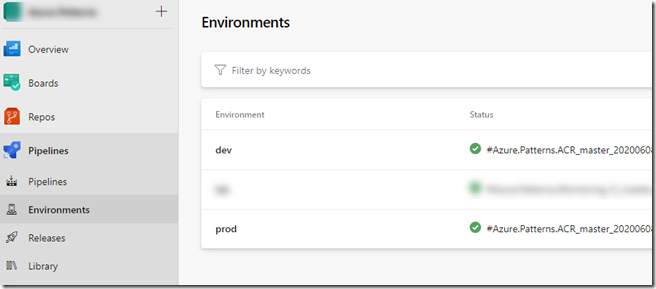

I also created 2 environments called “dev” and “prod” in my project, which are required for the YAML pipeline:

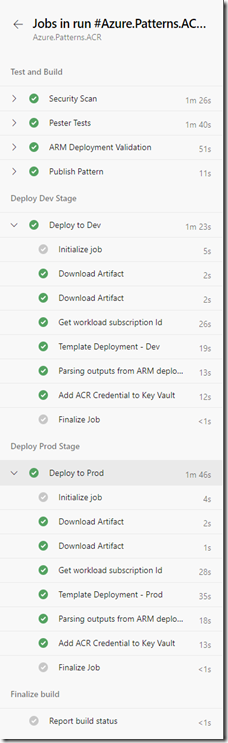

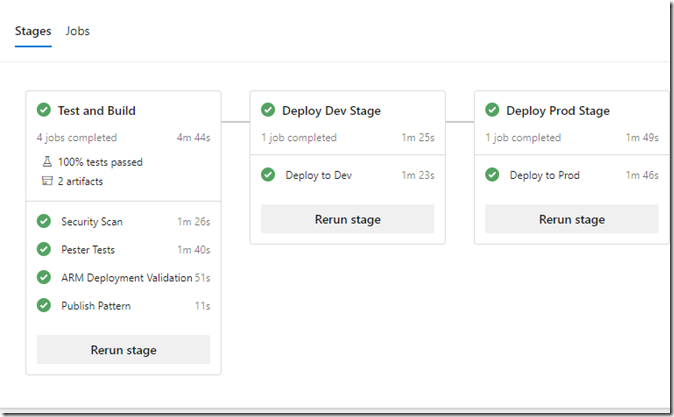

The pipeline contains the following stages:

- Test and Build

This pipeline is pretty straightforward. Once it completed, I was ready to continue with the second pipeline.

Docker Image

As part of the ACI pipeline, the Docker image is built from the Dockerfile I’ve created, scanned, and pushed to ACR. The Dockerfile basically performs the following steps:

- use base image Ubuntu 18.04 and install shadowsocks-libev using apt-get

- run apt-get upgrade

- clean up

- copy the Shadowsocks config file config.json, located in the same directory as the Dockerfile, to the image

- configure the image to start the shadowsocks-libev service when the container starts up

The config file controls various settings such as port mapping, the encryption method, and the password used when connecting to the Shadowsocks server. I don’t really consider the password here a secret because it’s a generic one that everyone who connects to my instance would use. I’ve put a very simple phrase here so it’s not too hard for people to enter when setting up a profile in their client apps.
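To make the shape of that config file concrete, here is a minimal sketch that renders a config.json in the layout shadowsocks-libev reads. The key names (`server`, `server_port`, `password`, `timeout`, `method`) are the standard shadowsocks-libev settings, but every value below is an assumption for illustration and is not taken from the file in the repo.

```python
import json

# Hypothetical values for illustration only; none of them come from the original post.
config = {
    "server": "0.0.0.0",       # listen on all interfaces inside the container
    "server_port": 8388,       # assumed port; must match the port the container exposes
    "password": "change-me",   # the shared passphrase clients enter in their profile
    "timeout": 300,            # idle connection timeout in seconds
    "method": "aes-256-gcm",   # assumed cipher; pick one that your client apps support
}


def render_config(settings: dict) -> str:
    """Serialize the settings as the JSON document stored in config.json."""
    return json.dumps(settings, indent=4)


print(render_config(config))
```

Whatever values are chosen, the port and cipher have to match what is entered in the client profile, otherwise the client connects but no traffic flows.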

Azure Container Instance Pipeline

The ACI pattern is located in the container/shadowsocks folder of my GitHub repo. Similar to the ACR pipeline, I needed to create some variable groups, service connections and environments to support the ACI pipeline:

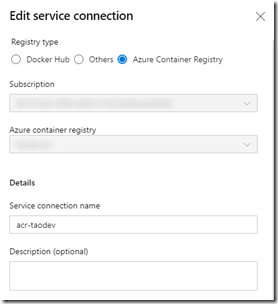

Service Connections:

- sub-workload-dev (Azure Resource Manager)

- acr-taodev (Docker Registry)

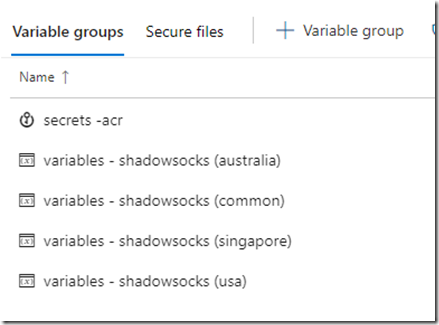

Variable Groups:

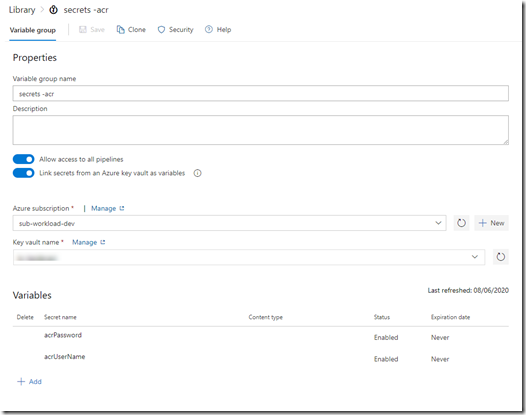

- secrets – acr (linked to the key vault created by the previous pipeline, with the secrets acrUseName & acrPassword added as variables)

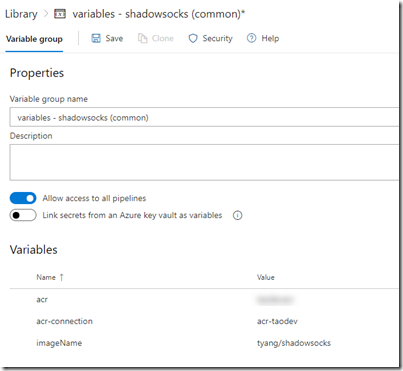

- variables – shadowsocks (common)

- acr (the name of the ACR created by previous pipeline)

- acr-connection (the name of the acr service connection)

- imageName

-

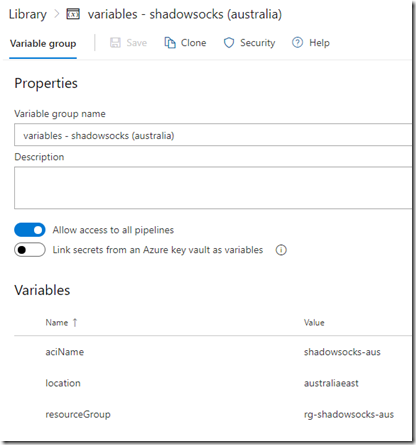

variables – shadowsocks (australia usa singapore) - aciName

- location

- resourceGroup

Environments:

- australia

- singapore

- usa

Unlike the ACR pipeline, I’m only deploying container instances to a single subscription (my dev subscription). Since the pipeline has a separate stage for each location, I’m using environments to separate locations instead of dev / prod.

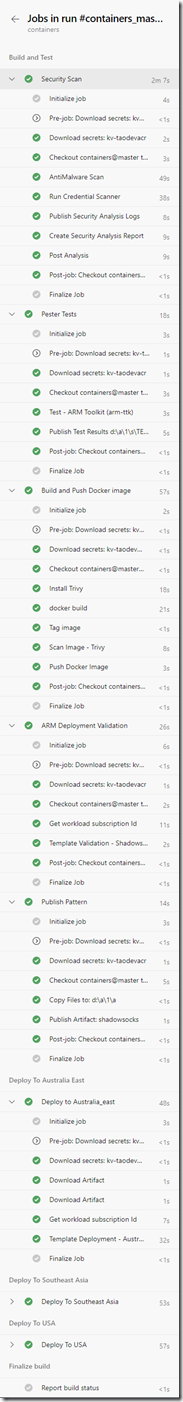

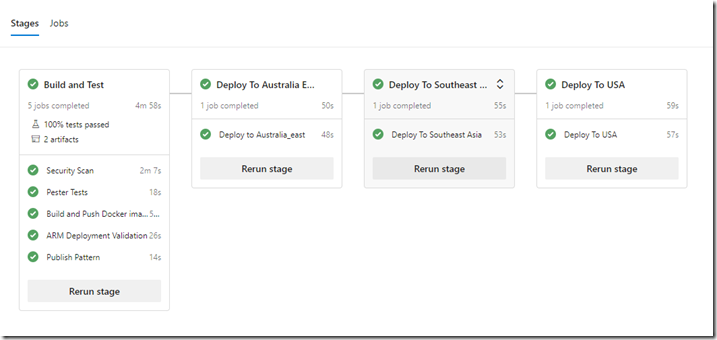

The pipeline performs the following tasks:

- Build and Test

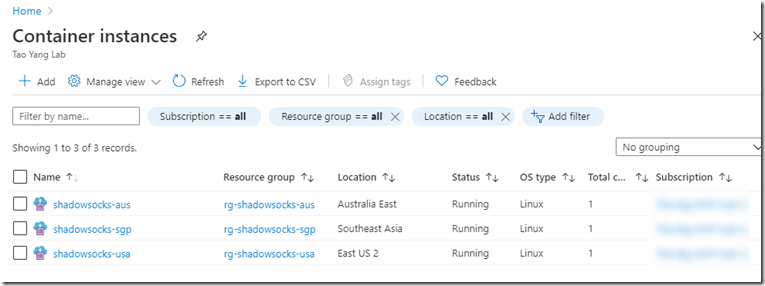

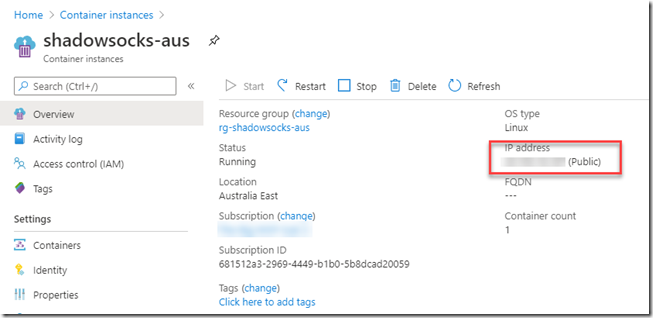

Once the pipeline has completed, I can get the public IP address for each container instance from the Azure portal.

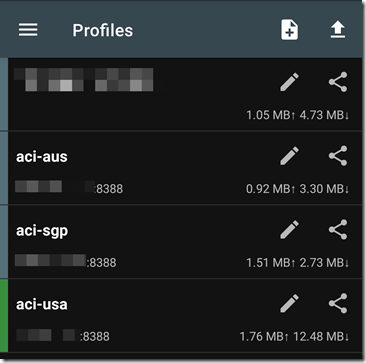

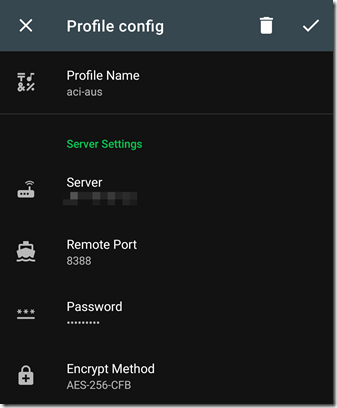
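If you would rather not click through the portal, the same public IP can be read with the Azure CLI. The sketch below wraps `az container show` with a query for `ipAddress.ip`; it assumes the Azure CLI is installed and already logged in, and the function names are my own for illustration.

```python
import subprocess


def build_show_ip_command(resource_group: str, container_group: str) -> list[str]:
    """Build the Azure CLI call that prints only the container group's public IP."""
    return [
        "az", "container", "show",
        "--resource-group", resource_group,
        "--name", container_group,
        "--query", "ipAddress.ip",
        "--output", "tsv",
    ]


def get_public_ip(resource_group: str, container_group: str) -> str:
    """Run the command; needs the Azure CLI on PATH and a prior `az login`."""
    result = subprocess.run(
        build_show_ip_command(resource_group, container_group),
        capture_output=True,
        text=True,
        check=True,  # raise CalledProcessError if the CLI reports a failure
    )
    return result.stdout.strip()
```

Calling `get_public_ip(...)` with the resourceGroup and aciName values from the matching variable group should print the address shown in the portal.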

I can then configure my Shadowsocks client app using the public IP address assigned to the container group, and the password specified in the Shadowsocks config file:

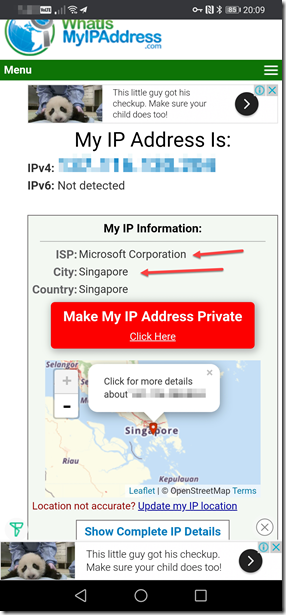
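Before blaming the client profile for a failed connection, it can help to confirm that the server port is reachable at all. This is a minimal sketch using only the Python standard library; the function name is hypothetical, and the host and port you pass in are whatever your own container group and config file use.

```python
import socket


def port_is_open(host: str, port: int, timeout: float = 3.0) -> bool:
    """Return True if a TCP connection to host:port succeeds within the timeout."""
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        # Covers refused connections, timeouts and unreachable hosts.
        return False
```

A successful check only proves that the port accepts TCP connections. It says nothing about whether the password or encryption method in the client profile is correct.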

To test, I connected the Shadowsocks client to one of the profiles (i.e. the instance located in Singapore), and browsed to https://whatismyipaddress.com/. I can see the IP address is not my home broadband IP address (even though I’m home right now and my phone is connected to the home wifi). Instead, it’s located in Singapore and belongs to Microsoft (since it’s running on Azure):

Conclusion

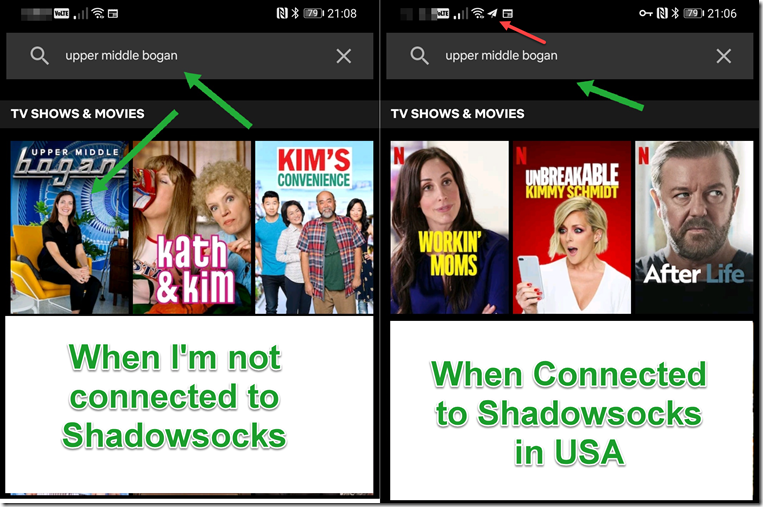

I also found something interesting when playing with Shadowsocks. Based on my testing, I noticed some video streaming providers have different content for different regions. For example, since I’m based in Australia, I am able to watch an Aussie comedy called Upper Middle Bogan on Netflix. But if I connect my phone to my Shadowsocks instance in the USA, I am not able to find the show on Netflix.

Having my own dedicated proxy servers in different parts of the world definitely has use cases beyond bypassing internet censorship.

I hope you find this post useful. Feel free to reach out to me via social media or GitHub.
